In an eerie new study, scientists have analyzed the brain activity of mice and used it to reconstruct videos of what the mice had seen.

The team, led by researchers at University College London (UCL), measured which individual neurons were firing in the visual cortex of mice, then ran the data through an algorithm that recreated videos the mice had seen, pixel by pixel.

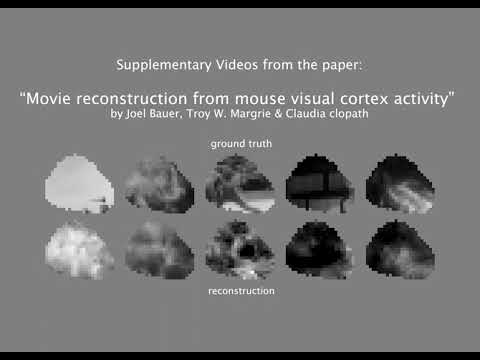

"Using this approach, we were able to achieve high-quality reconstructions of 10-second video clips," says Joel Bauer, neurobiologist at UCL and lead author of a study describing the work.

"The accuracy of the reconstructions improved with the inclusion of data from more individual neurons, demonstrating the importance of comprehensive neural data."

While it's a far cry from a pixel-perfect, HD remake of what the mouse did yesterday, it is eerie to see the similarities pulled out of pure neuronal data.

The researchers started with a dynamic neural encoding model developed by another team. This system predicts which neurons will fire in response to specific video inputs. It even accounts for the behavior of the mice while watching the videos, which includes things like their running speed and the position and diameter of their pupils.
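To make the idea of an encoding model concrete, here is a minimal sketch of one that maps a video frame plus behavioral measurements to a predicted firing rate for a single neuron. Everything in it is an assumption for illustration: the `Behavior` class, the `predict_rate` function, the weights and the softplus output are hypothetical and far simpler than the model the researchers actually used.

```python
from dataclasses import dataclass
from math import exp, log1p


@dataclass
class Behavior:
    """Hypothetical behavioral readout taken while the mouse watches a frame."""
    running_speed: float
    pupil_x: float
    pupil_y: float
    pupil_diameter: float


def predict_rate(frame, behavior, pixel_weights, behavior_weights, bias=0.0):
    """Toy encoder: weighted sum of pixels and behavior, squashed to a rate >= 0."""
    features = [behavior.running_speed, behavior.pupil_x,
                behavior.pupil_y, behavior.pupil_diameter]
    drive = bias
    drive += sum(w * p for w, p in zip(pixel_weights, frame))
    drive += sum(w * f for w, f in zip(behavior_weights, features))
    # Softplus keeps the predicted firing rate positive.
    return log1p(exp(drive))


# Illustrative numbers only, not taken from the study.
frame = [0.2, 0.8, 0.5, 0.1]              # four pixel intensities
pixel_weights = [0.5, 1.0, -0.3, 0.2]
behavior_weights = [0.4, 0.0, 0.0, 0.1]

still = Behavior(running_speed=0.0, pupil_x=0.0, pupil_y=0.0, pupil_diameter=1.0)
running = Behavior(running_speed=2.0, pupil_x=0.0, pupil_y=0.0, pupil_diameter=1.0)

rate_still = predict_rate(frame, still, pixel_weights, behavior_weights)
rate_running = predict_rate(frame, running, pixel_weights, behavior_weights)
print(rate_still, rate_running)
```

In this toy setup the same frame produces a higher predicted rate when the animal is running, which is the kind of behavioral dependence an encoding model has to capture.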

From that data, scientists can work backwards to recreate a general representation of the original video by analyzing which neurons are firing.

The previous work achieved a correlation of 0.301 between the predicted and "ground truth" neuronal activity.

To improve the accuracy of the recreations in the new study, the team retrained seven versions of the model using a blank grey screen as a baseline. They then calculated the difference between the neuronal activity expected if the mice were seeing a blank screen and the activity actually recorded.

From this, the scientists could update the blank screen pixel by pixel, until the finished video resembled what the mouse had originally seen.
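The general idea of starting from a grey frame and adjusting it until the predicted activity matches the recorded activity can be sketched with gradient descent. This is a toy illustration under strong assumptions, not the study's implementation: the encoder here is a made-up linear model with random weights, the "recorded" activity is simulated from a known image, and the names `predict` and `reconstruct` are hypothetical.

```python
import random


def predict(weights, pixels):
    """Toy linear encoder: each neuron's response is a weighted sum of pixels."""
    return [sum(w * p for w, p in zip(row, pixels)) for row in weights]


def reconstruct(weights, recorded, n_pixels, steps=500, lr=0.2, grey=0.5):
    """Start from a uniform grey frame and nudge pixels to match recorded activity."""
    pixels = [grey] * n_pixels
    for _ in range(steps):
        # Mismatch between predicted and recorded activity, per neuron.
        errors = [p - r for p, r in zip(predict(weights, pixels), recorded)]
        # Gradient of 0.5 * sum(error^2) with respect to each pixel.
        grads = [sum(e * row[j] for e, row in zip(errors, weights))
                 for j in range(n_pixels)]
        pixels = [p - lr * g for p, g in zip(pixels, grads)]
    return pixels


rng = random.Random(0)  # fixed seed so the run is repeatable
n_neurons, n_pixels = 40, 8

# Assumed random encoder weights, scaled so the updates stay stable.
weights = [[rng.gauss(0, 1) / n_neurons ** 0.5 for _ in range(n_pixels)]
           for _ in range(n_neurons)]

true_pixels = [rng.random() for _ in range(n_pixels)]
recorded = predict(weights, true_pixels)  # stand-in for measured activity

recovered = reconstruct(weights, recorded, n_pixels)
worst_error = max(abs(t - r) for t, r in zip(true_pixels, recovered))
print(f"largest pixel error: {worst_error:.2e}")
```

Because this toy has many more neurons than pixels and no noise, the grey frame converges closely to the hidden image. Real recordings are noisy and real encoders are nonlinear, so actual reconstructions are only approximate.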

After the models were trained, the researchers showed five mice a brand-new 10-second video that the models hadn't been specifically trained on.

The test animals' neuronal activity was enough to reconstruct the video more accurately than the previous model. In this case, the researchers achieved a correlation of up to 0.569 between the original and reconstructed videos.
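For readers unfamiliar with the metric, the snippet below shows one common way to score how closely a reconstruction tracks an original: the Pearson correlation over pixel values. The article does not spell out exactly how the study computed its figure, so treat this as an assumed illustration. The pixel lists are invented and the `pearson` helper is hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Made-up pixel intensities, flattened across space and time.
original = [0.1, 0.4, 0.8, 0.6, 0.2, 0.9]
reconstruction = [0.2, 0.3, 0.6, 0.7, 0.3, 0.7]

score = pearson(original, reconstruction)
print(f"correlation: {score:.3f}")
```

A value of 1 would mean the reconstruction rises and falls exactly with the original, 0 would mean no linear relationship, and negative values would mean the two tend to move in opposite directions.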

Success varied between videos, with the reconstructions matching the originals best in their timing, meaning when the pixels changed.

The resolution and coverage of the reconstructions weren't quite as successful as the timing, but the team plans to focus on improving these in future work.

The idea of 'reading' someone's mind and extracting a visual representation of what they've seen is a little alarming in a world where individual privacy has already been drastically eroded.

Previous research has used EEG caps to translate human participants' thoughts into text with a creepy degree of accuracy, while another study used fMRI scans to figure out the basic story that participants had heard in a podcast.

The researchers behind the new study, however, say that the main purpose of the work is to investigate just how the brain processes visual information from the eyes, and how that differs from what's actually there.

"We don't have a perfect representation of the world in our heads. The visual processing pipeline skews and warps our representation in a way that modifies information," says Bauer.

"This deviation between reality and representations in the brain is not necessarily an error but a feature, reflecting how our minds interpret and augment sensory information. We want to explore how this happens in the brain."

The research was published in the journal eLife.
